Wires the React Native app to the vineye-admin backend so user accounts
and scans flow into the admin panel, and so a ban applied via the panel
takes effect on the device on the next app boot (or sooner on any
authenticated request).

Core

- Install expo-secure-store for storing the better-auth session token.
  Falls back to AsyncStorage on web/Expo Go, where SecureStore is
  unavailable.
- New tokenStorage service with saveToken/getToken/removeToken and a
  stable per-install getDeviceId() (used to derive the deterministic
  password the backend uses for sign-up/sign-in).
- Extend the API client with apiPost(), automatic Bearer header
  attachment, and a tiny pub/sub (authEvents) that emits 'unauthorized'
  on a 401 and 'banned' on a 403 whose body has banned: true. Handlers
  are global, so any request can trigger logout or open the BannedModal.

Auth

- New services/api/auth.ts: syncUser (POST /auth/sync), fetchMe (GET
  /auth/me), signOutServer (POST /auth/sign-out, best-effort).
- types/auth.User now carries optional banned/bannedReason/role/xp/level
  fields hydrated from the backend.
- AuthContext.login is now async and goes through the backend; the
  server-side id replaces any locally generated UUID so mobile and admin
  agree on the same User row. Hydration is optimistic from AsyncStorage
  (it never blocks the splash screen on the network), and a background
  fetchMe picks up server-side ban changes. logout/resetAccount revoke
  the server session on a best-effort basis.
- AuthChoiceScreen surfaces sign-up failures through a toast instead of
  silently dropping the user into the app with no account.

Ban UX

- BannedModal: non-dismissible Tailwind modal with the bannedReason
  interpolated and a CTA that calls resetAccount. Mounted globally in
  RootNavigator and toggled by isBanned from AuthContext.
- Banned state is persisted alongside the User in AsyncStorage so the
  modal stays visible across restarts even when /auth/me is unreachable.

Scan sync

- New services/api/scans.pushScan() that maps the mobile ScanRecord to
  the backend body: confidence divided by 100 (0-100 on mobile → 0-1 on
  the backend), diseaseSlug passed through (the server resolves it to
  diseaseId), latitude/longitude copied directly, imageUrl always null
  (V1 keeps photos local-only), customName dropped (no column
  server-side; it stays in AsyncStorage).
- useHistory.addScan now fires pushScan after the local save and ignores
  failures so the app stays usable offline.

i18n

- New keys auth.errors.network/signupFailed and auth.banned.{title,
  description,descriptionNoReason,cta}. The fr/en files also include
  scanner gallery placeholder keys from an adjacent feature WIP; they
  are not part of this commit's scope but are bundled here to avoid
  splitting a small JSON.

Co-Authored-By: Claude Opus 4.7 (1M context) <noreply@anthropic.com>


TensorFlow Grapevine Disease Detection

Description

This project develops a mobile application for detecting diseases on grapevines using a deep learning model. The implementation uses TensorFlow and Keras to build a CNN-based classifier that identifies three common diseases: Black Rot, ESCA (Net Blight), and Leaf Blight.

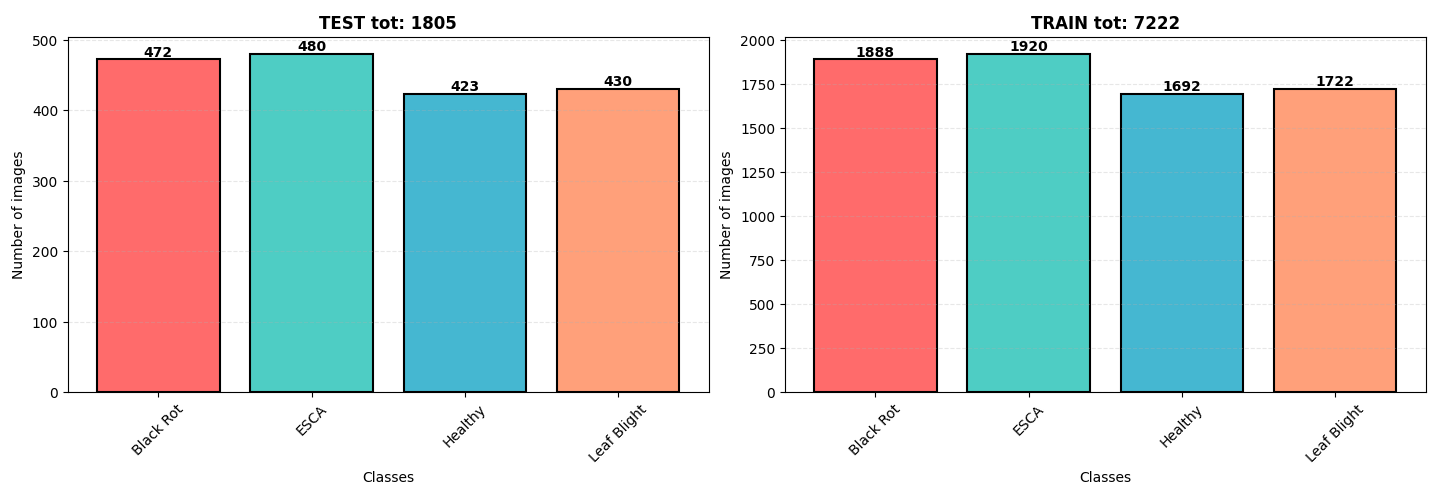

📁 Dataset

The dataset originates from Kaggle, containing 9,027 images of grapevine leaves. The diseases are categorized as:

- Black Rot

- ESCA (Net Blight)

- Leaf Blight

The dataset is well-balanced with a slight overrepresentation of ESCA and Black Rot. All images are in .jpeg format with dimensions 256x256 pixels.

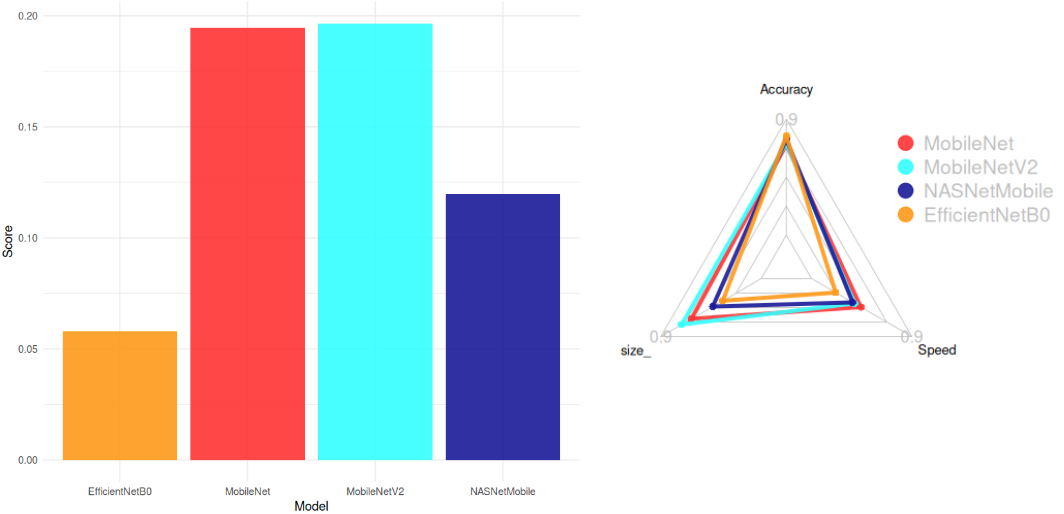

📊 Model Architecture Selection

We evaluated pre-trained models from Keras Applications (`tf.keras.applications`) to balance accuracy, model size, and inference speed. The selection criteria were:

- Maximize accuracy

- Minimize size (1/size)

- Maximize CPU speed (1/CPU Time)

The score formula used for selection was:

$$\text{Score} = \frac{\text{Accuracy}}{\text{Size} \times \text{CPU Time}}$$
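As a minimal sketch of how this score could be computed, the helper below assumes accuracy is expressed in percent, size in MB, and CPU time in ms per inference step (units the README does not state explicitly, but which are consistent with the 0.05 threshold). The function name `selection_score` and the 25 ms CPU time are hypothetical placeholders; only the 77% accuracy and 14 MB size come from the list of top models.

```python
def selection_score(accuracy_pct: float, size_mb: float, cpu_ms: float) -> float:
    """Accuracy divided by (size x CPU time); higher is better."""
    if size_mb <= 0 or cpu_ms <= 0:
        raise ValueError("size_mb and cpu_ms must be positive")
    return accuracy_pct / (size_mb * cpu_ms)


# Accuracy and size are the MobileNetV2 figures quoted in this README.
# The CPU time is a placeholder value chosen only for illustration.
ASSUMED_CPU_MS = 25.0
mobilenet_v2_score = selection_score(accuracy_pct=77.0, size_mb=14.0, cpu_ms=ASSUMED_CPU_MS)

# A model passes the README's filter when its score exceeds 0.05.
passes_filter = mobilenet_v2_score > 0.05
print(f"score={mobilenet_v2_score:.3f}, passes_filter={passes_filter}")
```

Because size and CPU time sit in the denominator, halving either one doubles the score, which is why small, fast mobile architectures dominate the ranking.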

Top Models (Score > 0.05):

- MobileNetV2 (Smallest: 14 MB, High Accuracy: 77%)

- MobileNet (Fastest: 22.6 ms)

- NASNetMobile

- EfficientNetB0

Conclusion:

MobileNetV2 was chosen for its optimal balance between accuracy, size, and speed.

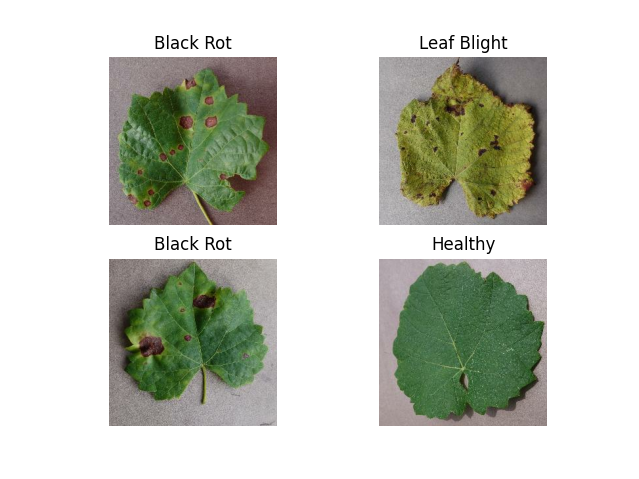

🍇 Grapevine Diseases

Key Diseases:

- Black Rot

- ESCA (Net Blight)

- Leaf Blight

🤖 Model Structure

Architecture:

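```python
import tensorflow as tf
from tensorflow.keras.callbacks import EarlyStopping
from tensorflow.keras.models import Sequential

# IMG_HEIGHT, IMG_WIDTH, CHANNELS, NUM_CLASSES and LEARNING_RATE are
# constants defined elsewhere in the project.

# Auto stop: halt training once val_loss has failed to improve by at
# least min_delta for `patience` consecutive epochs.
early_stopping = EarlyStopping(monitor="val_loss", min_delta=0.2, patience=10)

# Model: ImageNet-pretrained MobileNetV2 backbone plus a small dense head.
model = Sequential()
model.add(tf.keras.applications.MobileNetV2(
    input_shape=(IMG_HEIGHT, IMG_WIDTH, CHANNELS),
    include_top=False,
    weights='imagenet'
))
model.add(tf.keras.layers.GlobalAveragePooling2D())
model.add(tf.keras.layers.Dense(100, activation='relu'))
model.add(tf.keras.layers.Dense(100, activation='relu'))
model.add(tf.keras.layers.Dense(NUM_CLASSES, activation='softmax'))

optimizer = tf.keras.optimizers.Adam(learning_rate=LEARNING_RATE)
model.compile(
    optimizer=optimizer,
    # The last layer already applies softmax, so the loss receives
    # probabilities rather than logits.
    loss=tf.keras.losses.SparseCategoricalCrossentropy(from_logits=False),
    metrics=['accuracy']
)
```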

Parameters:

- Total params: 7.12M (27.17 MB)

- Trainable params: 2.36M (9.01 MB)

- Non-trainable params: 34.11K (133.25 KB)
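The sizes in parentheses can be sanity-checked with a little arithmetic. The sketch below assumes every parameter is stored as a 4-byte float32 and that "MB" means 2^20 bytes; neither is stated in this README. It also checks one possible reading of why the total is roughly three times the trainable count, namely that the remainder is optimizer state (Adam keeps two moment buffers per trainable weight). That interpretation is an assumption, not something the README confirms.

```python
BYTES_PER_FLOAT32 = 4  # assumption: all parameters are float32


def params_to_mib(n_params: float) -> float:
    """Convert a parameter count to mebibytes, assuming float32 storage."""
    return n_params * BYTES_PER_FLOAT32 / 2**20


# Counts as reported above (rounded, so results are approximate).
total = 7.12e6
trainable = 2.36e6
non_trainable = 34.11e3

total_mib = params_to_mib(total)          # close to the reported 27.17 MB
trainable_mib = params_to_mib(trainable)  # close to the reported 9.01 MB

# Parameters not accounted for by the model weights themselves.
remainder = total - trainable - non_trainable
# Under the Adam-state assumption this ratio should be close to 2.
remainder_per_trainable = remainder / trainable

print(f"{total_mib:.2f} MiB total, {trainable_mib:.2f} MiB trainable")
print(f"remainder / trainable = {remainder_per_trainable:.3f}")
```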

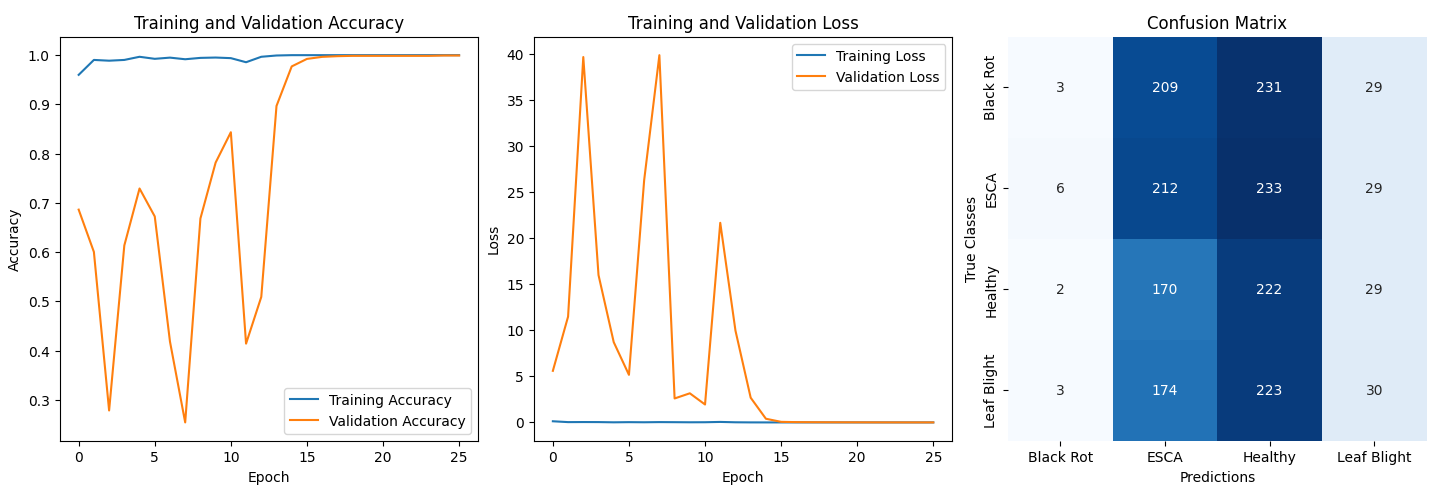

🛠️ Training Details

- Batch Size: 32

- Epochs: up to 100 (early stopping halted training after 25)

- Data Augmentation: Not used (insufficient improvement in accuracy)

- Normalization: Pixel values normalized to [0, 1]
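To illustrate how early stopping can cut a 100-epoch budget short, here is a simplified pure-Python sketch of the patience logic, using the same `min_delta=0.2` and `patience=10` as the `EarlyStopping` callback in the model code. It is an approximation of the callback's behaviour, not its actual implementation, and the loss curve is made up for the example.

```python
from typing import Sequence


def epochs_until_stop(val_losses: Sequence[float], min_delta: float, patience: int) -> int:
    """Return how many epochs run before patience-based early stopping fires."""
    best = float("inf")
    wait = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best - min_delta:
            # Improvement large enough to count: remember it, reset patience.
            best = loss
            wait = 0
        else:
            wait += 1
            if wait >= patience:
                return epoch + 1  # epochs are counted from 1
    return len(val_losses)


# Hypothetical validation losses: fast early gains, then a plateau.
losses = [1.0, 0.5, 0.2] + [0.15] * 97
epochs_run = epochs_until_stop(losses, min_delta=0.2, patience=10)
print(epochs_run)
```

With a `min_delta` as large as 0.2, small late improvements in `val_loss` do not reset the patience counter, so training tends to stop soon after the loss flattens.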

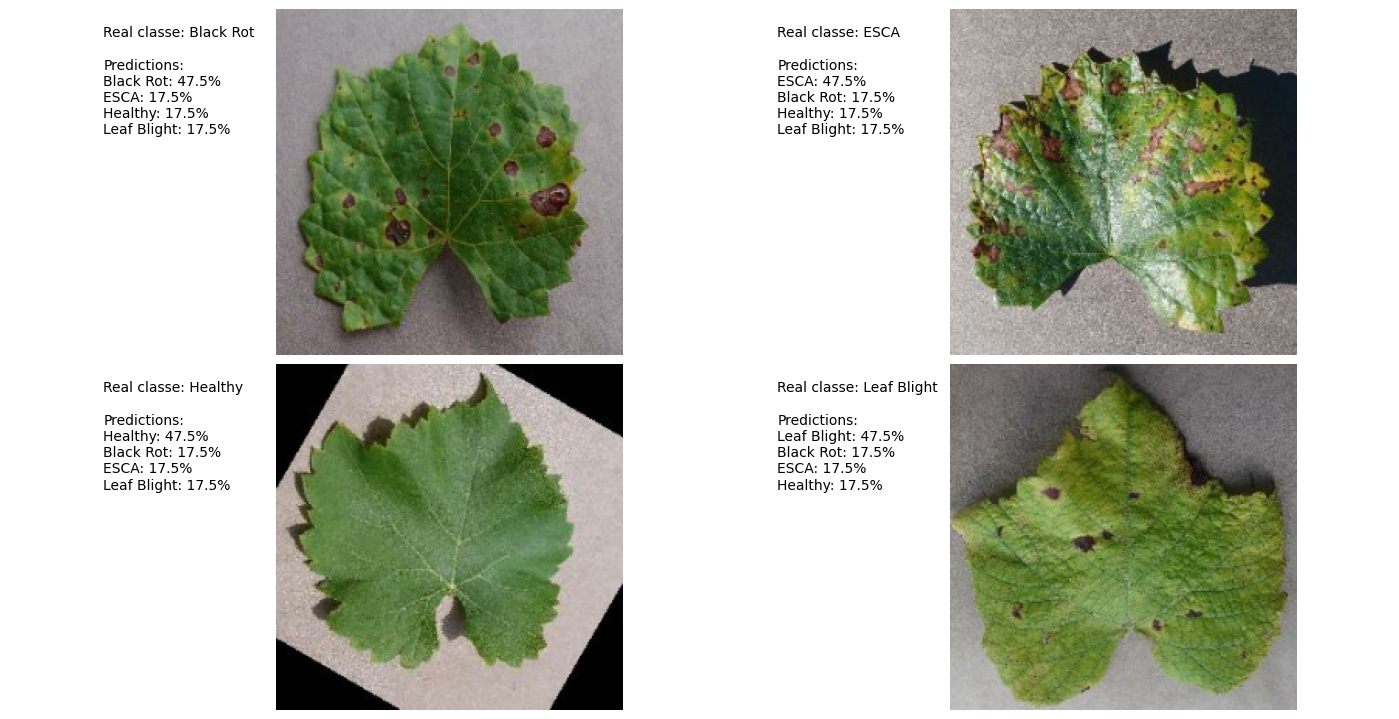

📊 Results

Performance:

- Validation Accuracy: ~99.9%
- Confusion Matrix Analysis:
  - Model biased toward ESCA and Healthy classes.
  - Suspected causes:
    - Original dataset imbalance
    - Similar visual features across diseases

Prediction Example:

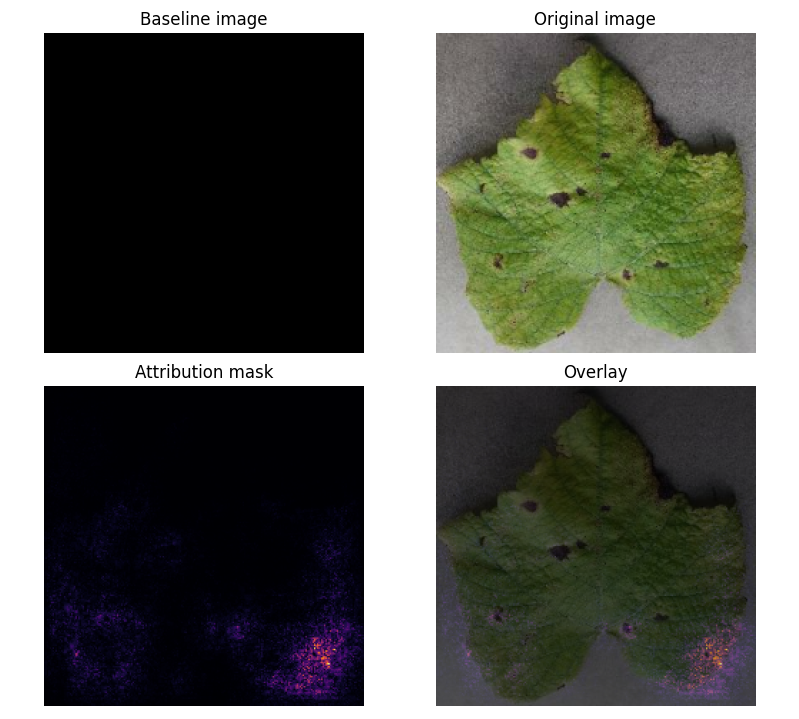

Attribution Mask:

- Key Insight: Model focuses on leaf shape rather than disease-specific features (e.g., black spots).
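The resources below link to TensorFlow's integrated gradients tutorial, so the attribution mask was presumably produced with that technique; this is an assumption, as the README does not say so. The sketch below shows the idea on a toy two-input function with a hand-written gradient, in pure Python rather than TensorFlow. The names `integrated_gradients`, `toy_model`, and `toy_grad` are hypothetical, and the integral is approximated with a midpoint Riemann sum.

```python
from typing import Callable, List, Sequence


def integrated_gradients(
    grad_fn: Callable[[Sequence[float]], List[float]],
    x: Sequence[float],
    baseline: Sequence[float],
    steps: int = 50,
) -> List[float]:
    """Approximate integrated gradients along the straight path baseline -> x."""
    avg_grads = [0.0] * len(x)
    for k in range(steps):
        alpha = (k + 0.5) / steps  # midpoint of the k-th sub-interval
        point = [b + alpha * (xi - b) for xi, b in zip(x, baseline)]
        for i, g in enumerate(grad_fn(point)):
            avg_grads[i] += g / steps
    # Scale the averaged gradients by the distance from the baseline.
    return [(xi - b) * g for xi, b, g in zip(x, baseline, avg_grads)]


def toy_model(p: Sequence[float]) -> float:
    """Stand-in for a network output: f(p) = p0^2 + 3 * p1."""
    return p[0] ** 2 + 3.0 * p[1]


def toy_grad(p: Sequence[float]) -> List[float]:
    """Analytic gradient of toy_model."""
    return [2.0 * p[0], 3.0]


x, baseline = [2.0, 1.0], [0.0, 0.0]
attributions = integrated_gradients(toy_grad, x, baseline)

# Completeness check: attributions should sum to f(x) - f(baseline).
gap = sum(attributions) - (toy_model(x) - toy_model(baseline))
print(attributions, gap)
```

For an image classifier, the inputs would be pixels, the baseline is typically a black image, and the per-pixel attributions are what get rendered as the mask.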

📚 Resources:

https://www.kaggle.com/code/ahmedmsaber/grape-leafs-diseases-mobilenetv2-val-acc-99

https://www.tensorflow.org/tutorials/images/classification?hl=en

https://www.tensorflow.org/lite/convert?hl=en

https://www.tensorflow.org/tutorials/interpretability/integrated_gradients?hl=en

🤖AI(s) : deepseek-coder:6.7b | deepseek-r1:8b